The use of Large Language Models (LLMs) in the cloud is fundamentally changing how organizations process knowledge, analyze documents, and prepare decisions. What began as a technical experiment has, by 2026, become a strategic tool in legal departments, customer service, and public administration alike.

But with the potential comes responsibility: organizations that feed sensitive data into external cloud models risk losing control over confidential information.

This article outlines the opportunities Open-Cloud LLMs offer, where the real risks lie, how the market is positioned in 2026, and what organizations can do concretely to operate AI-powered workflows securely and in compliance with applicable regulations.

Why security matters for Open-Cloud LLMs

Data protection and compliance

As soon as a prompt reaches an external language model, the data it contains leaves the organization’s own IT infrastructure. That may sound trivial, but the consequences are far-reaching. According to EnsEcur, LLMs can reproduce sensitive information from their training data, a risk that is compounded by careless prompt design. In regulated sectors such as healthcare or financial services, the EU AI Act, which entered into force in August 2024 and applies in stages, imposes binding requirements on AI systems. Violations of the GDPR or the AI Act can result not only in substantial fines but also in serious reputational damage.

Technical security vulnerabilities

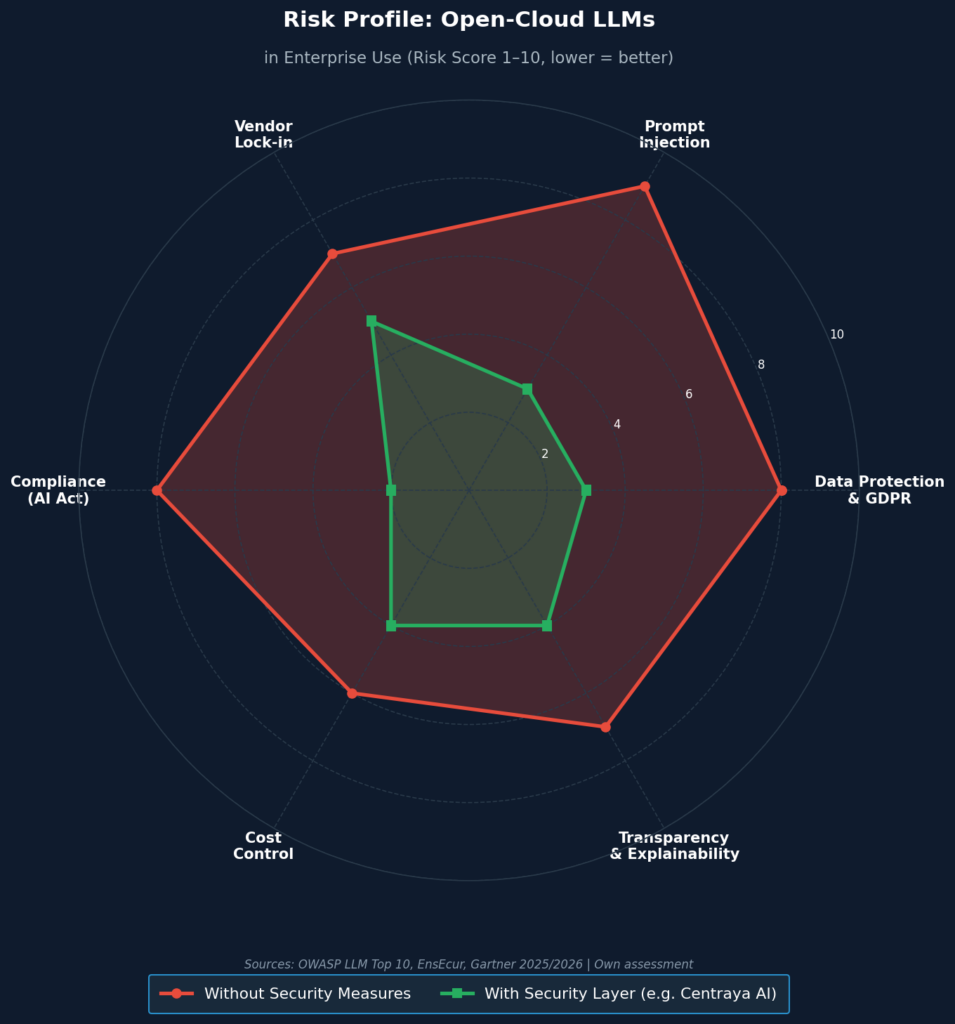

The OWASP Top 10 for LLM Applications systematically documents where attack surfaces emerge: prompt injection, where user inputs deliberately manipulate model behavior, tops the list. Additional risks stem from excessive model permissions, insufficient output validation, and missing access controls. Organizations that do not actively address these vulnerabilities not only expose themselves to data breaches but also risk turning AI systems into entry points for attackers. Specialized security layers offer a practical solution: Centraya AI, for example, encrypts prompts before transmission, effectively decoupling the processing logic from the actual content data.
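Two of the risks named above, excessive permissions and insufficient output validation, can be narrowed with a simple gate between the model and any action it proposes. The following is a minimal sketch, not a complete defense against prompt injection. It assumes the model returns a proposed action as JSON; the tool names, the `ALLOWED_TOOLS` table, and `validate_tool_call` are hypothetical and chosen only for illustration.

```python
import json

# Hypothetical allow-list: tool name -> argument names the tool may receive.
ALLOWED_TOOLS = {
    "search_documents": {"query"},
    "summarize": {"document_id"},
}


def validate_tool_call(raw_output: str) -> dict:
    """Parse a model-proposed tool call and reject anything not on the allow-list."""
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(call, dict):
        raise ValueError("model output must be a JSON object")

    tool = call.get("tool")
    args = call.get("args", {})
    if not isinstance(tool, str) or tool not in ALLOWED_TOOLS:
        raise ValueError(f"tool not permitted: {tool!r}")
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")

    # Reject argument names the tool was never meant to accept.
    unexpected = set(args) - ALLOWED_TOOLS[tool]
    if unexpected:
        raise ValueError(f"unexpected arguments: {sorted(unexpected)}")
    return {"tool": tool, "args": args}
```

The design choice here is to treat model output as untrusted input: nothing is executed unless it matches a list the organization has defined in advance.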

Opportunities of Open-Cloud LLMs

Scalability and cost efficiency

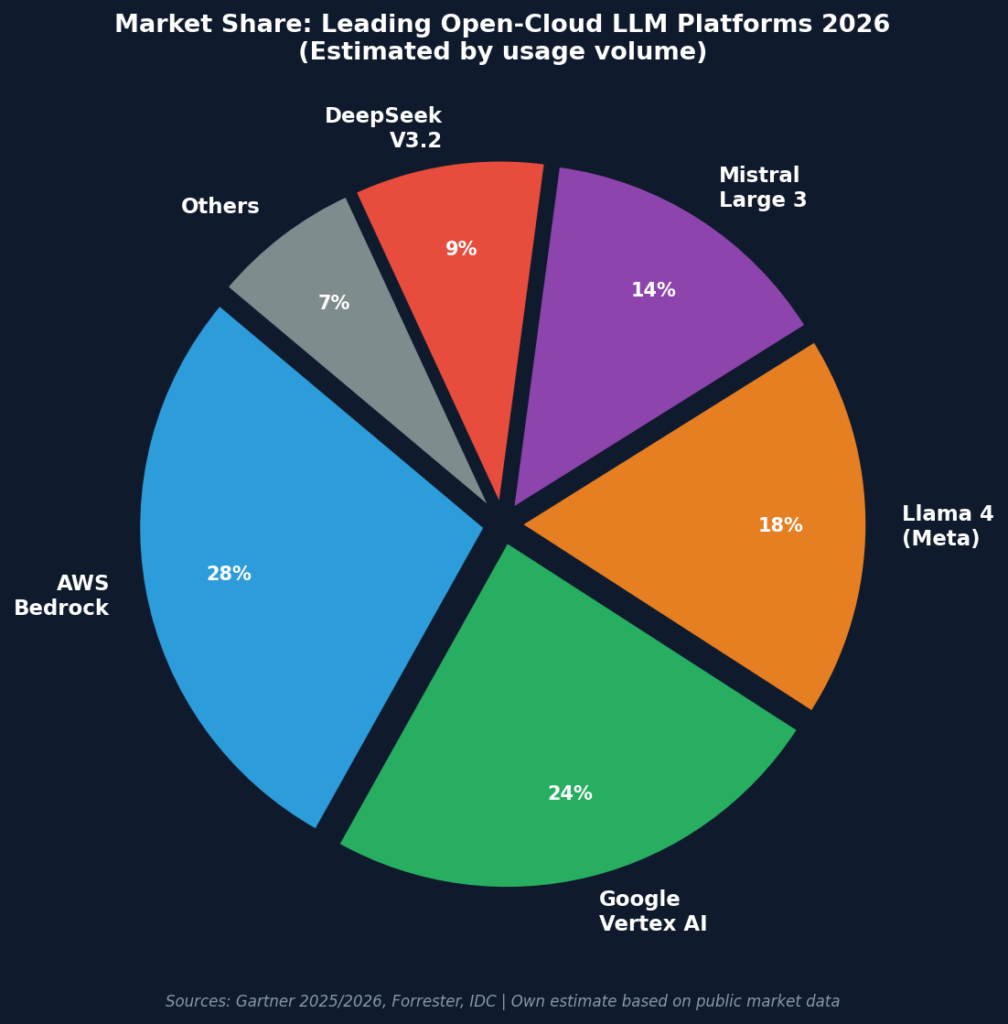

Competitive pressure on LLM providers is intense, and organizations stand to benefit. Gartner forecasts that inference costs for models with one trillion parameters will fall by more than 90 percent by 2030 compared to 2025 levels. At the same time, open-source models such as Llama 4 and Mistral Large 3 are advancing at a pace that puts proprietary vendors under pressure. For small and medium-sized enterprises, this translates into access to enterprise-grade AI without enterprise-level licensing costs, provided implementation is carefully planned.
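To put the forecast into planning terms, a 90 percent drop over five years can be converted into an implied yearly rate. The sketch below assumes a constant annual decline, which is a simplification introduced here and not part of the forecast; the function name is illustrative.

```python
def implied_annual_decline(total_reduction: float, years: int) -> float:
    """Constant yearly decline rate that compounds to total_reduction over the period."""
    remaining = 1.0 - total_reduction          # share of the original cost left at the end
    return 1.0 - remaining ** (1.0 / years)    # yearly rate that compounds to that share


# 90 percent lower in 2030 than in 2025, i.e. over five years.
rate = implied_annual_decline(0.90, 2030 - 2025)
print(f"{rate:.1%} per year")
```

Under that assumption, costs would have to fall by roughly 37 percent each year, and by more if the total reduction exceeds 90 percent.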

Innovation and competitive advantage

Forrester’s recent forecasts emphasize that strategic advantage does not come from using LLMs alone, but from the depth of their integration into existing processes. Organizations that have already embedded LLMs in document analysis, contract management, or knowledge management report significant efficiency gains. The real potential lies less in spectacular standalone applications than in the cumulative effect of automated routine tasks, an effect that compounds over months into a measurable competitive edge.

Risks and challenges of Open-Cloud LLMs

Data protection and compliance risks

The greatest operational risk when using Open-Cloud LLMs does not come from spectacular hacks, but from everyday carelessness: one employee pastes customer data into a prompt, another sends medical records to an external model for summarization. According to EnsEcur, it is precisely these gradual data losses that become liability traps in regulated environments. A robust data classification framework that defines which information may be processed by which models is the most effective countermeasure.
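A classification framework of this kind can be expressed as a small policy table that is checked before any prompt is sent. The sketch below is one possible shape, not a prescribed scheme: the sensitivity levels, the destination names, and the ceilings assigned to them are all assumptions made for illustration.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2   # e.g. customer data
    RESTRICTED = 3     # e.g. medical records


# Hypothetical policy: the highest sensitivity each destination may receive.
MAX_SENSITIVITY = {
    "external_cloud_llm": Sensitivity.INTERNAL,
    "eu_hosted_llm": Sensitivity.CONFIDENTIAL,
    "on_premises_llm": Sensitivity.RESTRICTED,
}


def may_process(destination: str, label: Sensitivity) -> bool:
    """Return True only if the destination is known and cleared for this label."""
    ceiling = MAX_SENSITIVITY.get(destination)
    return ceiling is not None and label <= ceiling
```

Unknown destinations are denied by default, so a newly introduced model cannot receive data until someone has explicitly classified it.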

Vendor lock-in

Forrester’s analyses highlight that many organizations underestimate the structural risks of single-vendor cloud dependency. Building an entire AI stack on one provider – whether AWS, Google Cloud, or Microsoft Azure – creates exposure to price adjustments, service outages, and strategic pivots by platform vendors. A well-designed multi-cloud or hybrid strategy significantly reduces this dependency while also strengthening the organization’s negotiating position with providers.
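On the technical side, reducing dependency usually starts with keeping provider-specific code behind a common interface. The following sketch models each provider as a plain function from prompt to completion and tries them in order. The function name and the provider labels in the usage note are hypothetical; real SDK calls would sit inside the individual provider functions.

```python
from typing import Callable, Sequence

# A provider is modelled as a plain function from prompt to completion.
Provider = Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a production system would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

Called with a list such as `[("primary", call_primary), ("secondary", call_secondary)]`, the application code never needs to know which vendor answered, which keeps a later switch of providers a configuration change instead of a rewrite.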

Hallucinations and quality assurance

LLMs are not knowledge databases – they are probability engines that produce coherent text without any inherent factual understanding. This leads to so-called hallucinations: plausibly worded statements that are factually wrong. In a marketing text, that may be inconvenient; in a legal brief, a medical summary, or a financial report, the consequences can be serious. Organizations deploying LLMs in critical processes therefore need mandatory human-in-the-loop review procedures, as well as technical countermeasures such as Retrieval-Augmented Generation (RAG), which anchors model responses to verified sources.
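The core idea of RAG can be shown in a few lines: select the verified passages most relevant to a question and instruct the model to answer only from them. This sketch uses simple word overlap for retrieval, which is an assumption made to keep the example self-contained; production systems typically use embedding-based search. All function names are illustrative.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, sources: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of up to k sources that share the most words with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), sid) for sid, text in sources.items()]
    relevant = [s for s in scored if s[0] > 0]
    ranked = sorted(relevant, key=lambda s: (-s[0], s[1]))
    return [sid for _, sid in ranked[:k]]


def build_prompt(question: str, sources: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    ids = retrieve(question, sources, k)
    context = "\n".join(f"[{sid}] {sources[sid]}" for sid in ids)
    return (
        "Answer using only the sources below and cite their ids. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )
```

Because every answer is expected to cite a source id, a human reviewer can check a claim against the referenced passage instead of judging the output on plausibility alone.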

Market overview: tools for secure Open-Cloud LLMs

Centraya AI

Among specialized security solutions for cloud LLM deployment, Centraya AI positions itself as a dedicated security and encryption proxy. The principle: before a prompt leaves the corporate network, it is encrypted and anonymized. The external model never processes the raw data, only a transformed representation. Particularly for organizations in healthcare, public administration, or financial services that must meet stringent data protection requirements, this approach offers a practical middle ground between AI adoption and regulatory compliance.
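The general pattern of sending only a transformed representation can be illustrated with reversible pseudonymization. The sketch below is not Centraya AI's implementation, whose internals are not described here. It is a simplified stand-in that replaces email addresses with placeholders, keeps the mapping inside the corporate network, and restores the originals in the model's response. The regular expression and function names are assumptions, and a real deployment would cover many more identifier types.

```python
import re

# Deliberately simple email pattern, used for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace each distinct email address with a placeholder; return text and mapping."""
    mapping: dict[str, str] = {}

    def swap(match: re.Match) -> str:
        value = match.group(0)
        # Reuse the placeholder if this address was already seen.
        for token, original in mapping.items():
            if original == value:
                return token
        token = f"<EMAIL_{len(mapping) + 1}>"
        mapping[token] = value
        return token

    return EMAIL.sub(swap, prompt), mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text returned by the external model."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

Only the pseudonymized text would be sent to the external model; the mapping never leaves the organization, so the provider sees placeholders in place of the raw addresses.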

Tool comparison

| Tool | Key Feature | Use Case | Security & Compliance |
| --- | --- | --- | --- |
| Llama 4 (Meta) | Open-source, high performance | Text generation, chatbots | Depends on implementation |
| Mistral Large 3 | Multimodal, high accuracy | Enterprise applications | GDPR compliance possible |
| DeepSeek V3.2 | Optimized for complex tasks | Research, development | High data security |
| Google Vertex AI | Google Cloud integration | Enterprise solutions | Strong compliance features |
| AWS Bedrock | Scalable AI solutions | Cloud-native applications | Comprehensive security controls |

SWOT analysis: Open-Cloud LLMs 2026

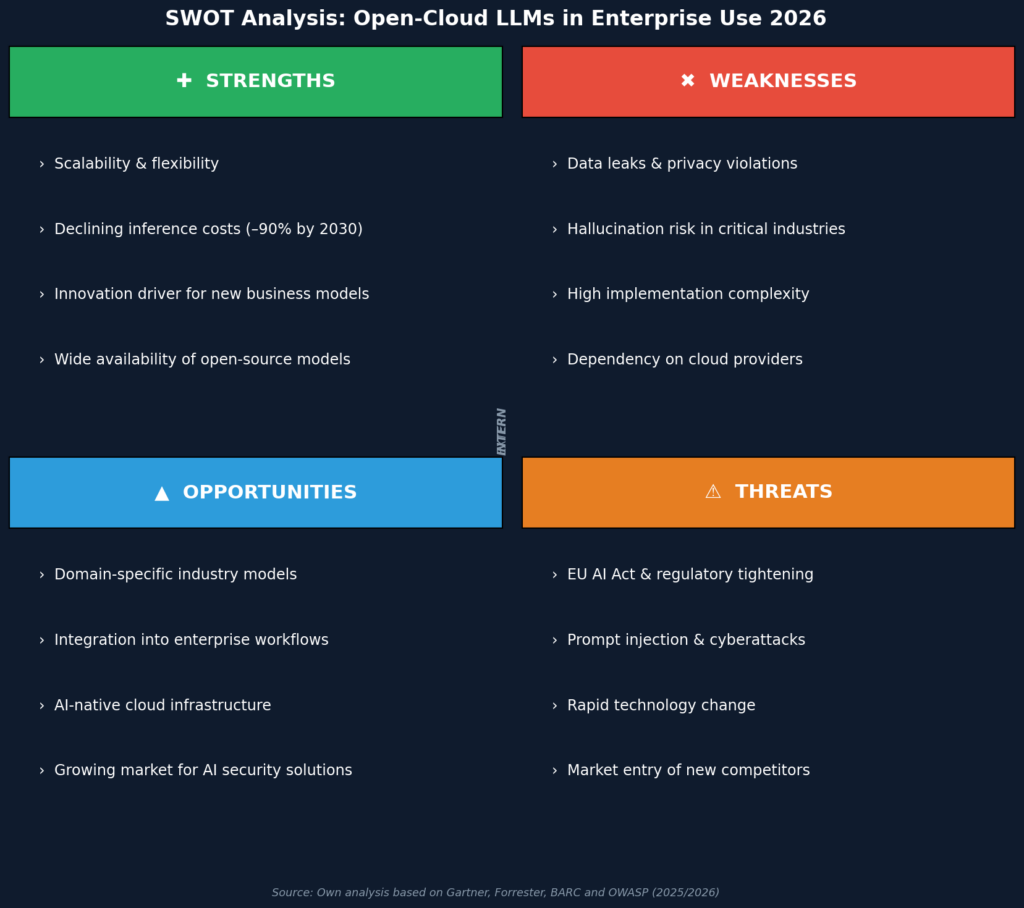

The SWOT analysis above summarizes the key strategic dimensions for decision-makers evaluating cloud LLM adoption. It takes into account both the internal organizational capabilities and the external market conditions that will shape deployment success in 2026.

Conclusion

By 2026, Open-Cloud LLMs are no longer a future topic. They are an operational reality. For organizations, the question is no longer whether to adopt them, but how to do so responsibly. The key lies in a structured approach: data protection and compliance must be built in from the start, not bolted on as an afterthought. Hybrid strategies that combine multiple providers while integrating internal control mechanisms such as prompt encryption or RAG systems offer the best balance between performance and security.

The organizational dimension is equally decisive: even the best security tool delivers little if employees do not understand which data they are permitted to enter into an AI system. Awareness programs and clear policies are therefore just as indispensable as technical safeguards. Organizations that consistently combine both will not only minimize risks – they will turn the strategic use of AI into a lasting competitive advantage.

FAQs: Open-Cloud LLMs

Which industries benefit most from Open-Cloud LLMs?

Industries with high document volumes and complex analytical requirements – above all law, medicine, and public administration – benefit most clearly. Here, LLMs can significantly accelerate routine tasks such as contract review, diagnostic summarization, or administrative document preparation. Customer service, marketing, and software development also report measurable efficiency gains once LLMs are deeply integrated into existing workflows.

How do I protect my data when using Open-Cloud LLMs?

The most effective protection starts before the prompt: organizations should establish a binding data classification framework that defines which information may be passed to external models. On the technical side, solutions such as Centraya AI complement this approach by encrypting prompts before transmission. Provider selection should consistently be evaluated against GDPR and AI Act compliance – certifications and data processing agreements are non-negotiable.

What costs are involved in using Open-Cloud LLMs?

The cost spectrum is wide: open-source models like Llama 4 are available without licensing fees, but require investment in infrastructure, operations, and security architecture. Managed enterprise services such as Google Vertex AI or AWS Bedrock charge usage-based fees, but in return offer ready-to-use compliance features. Gartner forecasts that raw inference costs will fall by more than 90 percent by 2030 – shifting the primary cost driver increasingly toward integration, quality assurance, and organizational change management.