In an unusually specific forecast, renowned research and advisory firm Gartner is warning of an impending catastrophic scenario.

Gartner predicts that by 2028, a misconfigured AI system embedded in cyber-physical infrastructure will bring critical services in a G20 nation to a standstill. The warning is directed particularly at operators of power grids, manufacturing facilities, and other systemically important installations.

What are cyber-physical systems?

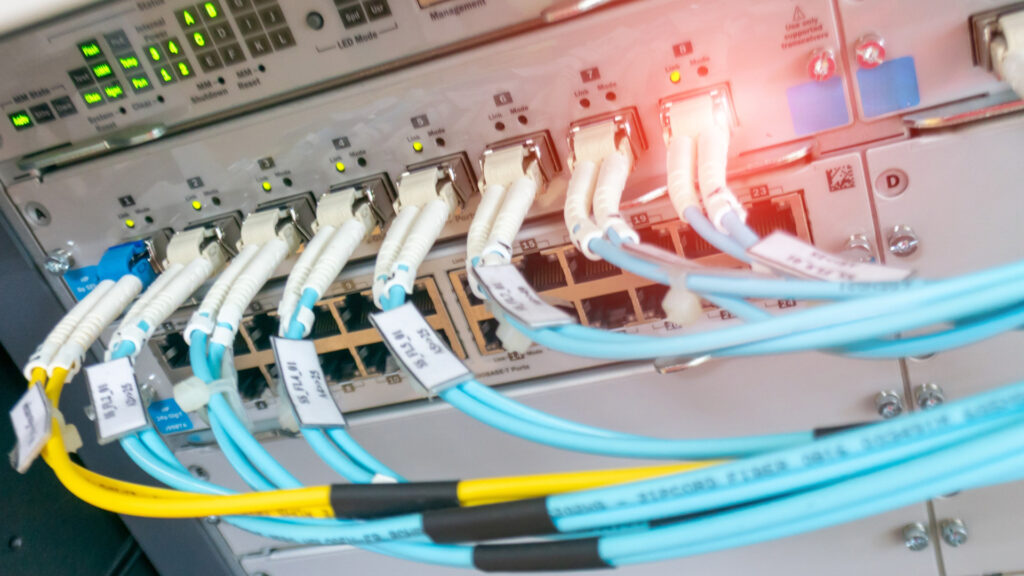

Gartner defines cyber-physical systems (CPS) as technical systems that orchestrate sensing, data processing, control, networking, and analytics to interact with the physical world. The term encompasses operational technology (OT), industrial control systems (ICS), the industrial internet of things (IIoT), robotics, drones, and Industry 4.0 applications.

The danger of well-intentioned mistakes

“The next major infrastructure outage may not be caused by hackers or natural disasters, but by a well-meaning technician, a faulty update script, or a misplaced decimal point,” warns Wam Voster, VP Analyst at Gartner. He argues that a secure emergency shutdown or override mode accessible exclusively to authorized operators is essential to protect critical infrastructure from unintended failures caused by AI misconfigurations.

Misconfigured AI systems could autonomously shut down vital services, misinterpret sensor data, or trigger unsafe actions. This could lead to physical damage or large-scale service disruptions, posing direct threats to public safety and economic stability.

Power grids particularly at risk

Modern power grids offer a concrete illustration of the risk. These systems increasingly rely on AI to balance electricity generation and consumption in real time. A misconfigured forecasting model could interpret normal demand fluctuations as signs of instability and trigger unnecessary grid shutdowns or load shedding across entire regions or even entire countries.
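To make the scenario concrete, the following is a minimal sketch of how a single misplaced decimal point could turn ordinary demand fluctuations into shutdown triggers. It is not taken from Gartner or from any real grid control system: the function `should_shed_load`, the tolerance values, and the megawatt readings are all hypothetical and chosen only for illustration.

```python
def should_shed_load(forecast_mw: float, actual_mw: float, tolerance: float) -> bool:
    """Return True when actual demand deviates from the forecast by more than
    the configured relative tolerance (hypothetical decision rule)."""
    deviation = abs(actual_mw - forecast_mw) / forecast_mw
    return deviation > tolerance


# Illustrative readings: normal fluctuations of 1 to 3 percent around the forecast.
forecast_mw = 1000.0
readings_mw = [980.0, 1015.0, 1030.0, 990.0]

# Intended setting (assumed): react only to deviations above 5 percent.
intended = [should_shed_load(forecast_mw, r, 0.05) for r in readings_mw]

# Misconfigured setting: the decimal point has slipped, so the limit is 0.5 percent.
misconfigured = [should_shed_load(forecast_mw, r, 0.005) for r in readings_mw]

print("intended config triggers:", intended)
print("misconfigured config triggers:", misconfigured)
```

With the assumed 5 percent tolerance, none of the readings triggers load shedding. With the slipped decimal point, every one of them does, even though nothing about the grid itself has changed.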

“Modern AI models are so complex that they often resemble black boxes,” Voster explains. Even developers cannot always predict how small configuration changes will affect a model’s emergent behavior. The more opaque these systems become, the greater the risk from misconfiguration and the more critical it is that humans can intervene.

Three core recommendations

Gartner offers Chief Information Security Officers (CISOs) three concrete measures to address these risks.

First, organizations should implement secure override modes, in effect emergency shutoffs, for all CPS in critical infrastructure, accessible only to authorized operators. This ensures that humans retain ultimate control even in highly autonomous environments.
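In software terms, an override mode of this kind could look roughly like the sketch below. This is an assumption about one possible design, not a Gartner specification: the class `OverrideController`, its methods, and the operator IDs are hypothetical, and a real system would rely on strong authentication and hardware interlocks rather than a simple ID check.

```python
class OverrideController:
    """Gates AI-issued actions behind a human-controlled override (hypothetical)."""

    def __init__(self, authorized_operators):
        self._authorized = frozenset(authorized_operators)
        self._override_active = False

    def engage_override(self, operator_id: str) -> None:
        # Only operators on the authorized list may take control away from the AI.
        if operator_id not in self._authorized:
            raise PermissionError(f"operator {operator_id!r} is not authorized")
        self._override_active = True

    def apply_ai_action(self, action: str) -> str:
        # While the override is engaged, every AI-issued action is refused.
        if self._override_active:
            return "blocked"
        return f"executed:{action}"


controller = OverrideController({"op-1"})
print(controller.apply_ai_action("reduce_load"))  # AI acts normally
controller.engage_override("op-1")                # authorized operator steps in
print(controller.apply_ai_action("reduce_load"))  # AI action is now refused
```

The point of the design is that the check sits between the AI and the actuator, so the human decision takes precedence regardless of what the model outputs.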

Second, Gartner recommends developing comprehensive digital twins of critical infrastructure systems, enabling realistic testing of updates and configuration changes before they are deployed in live environments.
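The idea of testing a change against a twin before deployment can be sketched as a simple approval gate. The example below is a heavily simplified stand-in for a digital twin, not a real one: `twin_simulate`, `approve_change`, the `tolerance` setting, and the recorded demand trace are all hypothetical.

```python
def twin_simulate(config: dict, demand_trace_mw: list) -> int:
    """Replay recorded (forecast, actual) pairs against a candidate config and
    count how often it would have shed load (hypothetical twin model)."""
    shed_events = 0
    for forecast, actual in demand_trace_mw:
        if abs(actual - forecast) / forecast > config["tolerance"]:
            shed_events += 1
    return shed_events


def approve_change(config: dict, demand_trace_mw: list, max_shed_events: int = 0) -> bool:
    """Approve a config only if the twin shows no more than the allowed shed events."""
    return twin_simulate(config, demand_trace_mw) <= max_shed_events


# Recorded trace of a normal day (illustrative values only).
normal_day = [(1000.0, 980.0), (1000.0, 1030.0), (1200.0, 1185.0)]

print(approve_change({"tolerance": 0.05}, normal_day))   # candidate A
print(approve_change({"tolerance": 0.005}, normal_day))  # candidate B, decimal slipped
```

A candidate that would have shed load on a day known to be normal is rejected before it ever reaches the live environment.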

Third, mandatory real-time monitoring with rollback mechanisms should be introduced for any AI changes in CPS, alongside the establishment of national AI emergency response teams capable of acting swiftly when things go wrong.
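A rollback mechanism of the kind described could, under simple assumptions, be sketched as follows. The names `ConfigManager`, `monitor`, and `looks_healthy`, as well as the health criterion itself, are hypothetical and serve only to illustrate the pattern of keeping the previous configuration available and reverting automatically when monitoring detects a problem.

```python
class ConfigManager:
    """Keeps a history of deployed configs so the last change can be undone."""

    def __init__(self, initial_config: dict):
        self._history = [dict(initial_config)]

    @property
    def active(self) -> dict:
        return self._history[-1]

    def deploy(self, new_config: dict) -> None:
        self._history.append(dict(new_config))

    def rollback(self) -> dict:
        # Never remove the original baseline config.
        if len(self._history) > 1:
            self._history.pop()
        return self.active


def monitor(manager: ConfigManager, health_check, samples) -> bool:
    """Check each sample against the active config; roll back on the first failure.
    Returns True if a rollback was performed."""
    for sample in samples:
        if not health_check(manager.active, sample):
            manager.rollback()
            return True
    return False


def looks_healthy(config: dict, normal_deviation: float) -> bool:
    # Assumed criterion: a deviation known to be normal must not exceed the tolerance.
    return normal_deviation <= config["tolerance"]


manager = ConfigManager({"tolerance": 0.05})
manager.deploy({"tolerance": 0.005})  # faulty update
rolled_back = monitor(manager, looks_healthy, [0.02, 0.01])
print(rolled_back, manager.active)
```

In this sketch the faulty update is detected on the first monitored sample and the previous configuration becomes active again without manual intervention.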

The forecast serves as a timely reminder that as AI takes on greater autonomy in managing physical systems, the consequences of getting it wrong are no longer confined to the digital realm. They can cascade into the real world with potentially devastating effect.

(lb/Gartner)